By Jennifer Kiilerich

In several Tennessee high school classrooms, computer science students are stepping away from their screens. Instead, as part of a learning tool Vanderbilt University researchers are developing, the teens are actively moving around their environments, enacting algorithms and processes, asking questions and collaborating on solutions.

It’s not often that coding lessons become physical, but GEM-STEP (Generalized Embodied Modeling with Science through Technology Enhanced Play) makes science personal, fun, and—researchers believe—more effective.

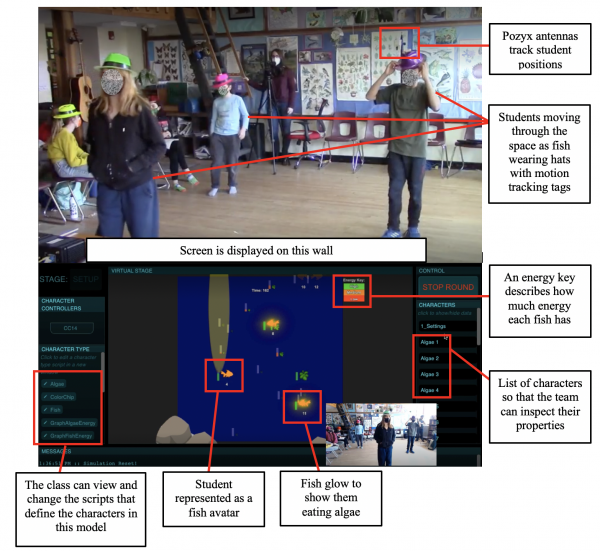

The tool’s first generation was launched some 15 years ago when Professor Noel Enyedy envisioned STEP as a new way to grab students’ imaginations. His mixed-reality lessons synced trackers on kids’ hats with avatars on a screen, allowing them to act out science. Instead of being lectured about states of matter, they could become a water molecule.

“We wanted to do things differently in science classes. We considered how kids could learn at a deeper level and come to love the subject matter,” said Enyedy, professor of teaching and learning at Vanderbilt Peabody College of education and human development.

Since then, he and his colleagues have brought STEP to many classrooms, updating it in tandem with technological advances. The latest version, GEM-STEP, incorporates AI and is supported by an interdisciplinary team from across Vanderbilt, including Enyedy; Gautam Biswas, Cornelius Vanderbilt Professor of Engineering; computer science Ph.D. student Joyce Fonteles; teaching and learning Ph.D. student Efrat Ayalon; and others. It’s being piloted this academic year in schools, following a successful test run with teens in the School for Science and Math at Vanderbilt, a program that gives Metro Nashville Public Schools students experiential learning on Vanderbilt’s campus.

The researchers connected with one another through Peabody College’s LIVE Learning Innovation Incubator, and the work has been funded by a series of federal grants, including a $2.8 million, five-year award from the National Science Foundation that concluded in 2024. The current project is supported by EngageAI, a multistate network of educational institutions and other partners funded by a $20 million grant from the National Science Foundation. Professor Biswas is a co-principal investigator (co-PI). EngageAI members conduct research on narrative-centered learning technologies, adaptive collaborative learning, and multimodal learning analytics to create engaging, collaborative, story-based learning experiences.

Findings from Enyedy’s initial studies highlight the importance of students learning playfully and actively, with their full bodies. Embodied learning, he noted, draws all types of students into science, making STEM widely accessible.

Biswas agreed: “To get kids interested in science, you have to connect it to the real world. Embodiment makes you think as you act,” he said.

EMBODIED LEARNING: BIG IDEA, BIG IMPACT

Despite its tech focus, Enyedy’s inspiration for STEP didn’t come from a science lab. At the time, he worked at UCLA: “I was hanging out with some folks in the theater department,” he said, “and they were using tracking technology so that the actors could control their own lighting and sound on stage.”

He wondered: Why not apply that same technology to the classroom? So, Enyedy’s team designed STEP to replicate natural phenomena, allowing learners to embody specific roles by wearing tracking tags (like those used in mobile devices) that sync to the lesson’s scientific models.

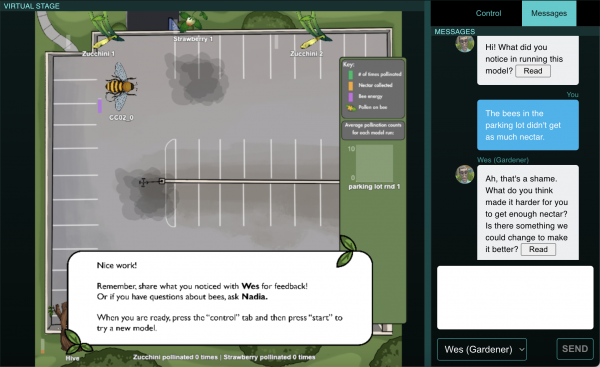

To learn about states of matter, for example, first graders hold still and spread out at precise distances, and a screen linked to their trackers shows that they have formed ice: green dots frozen in place. “If they run around the room, they quickly learn they can form gas,” said Enyedy, and their dots turn red. Moving slowly creates liquid, shown in blue on the screen. In another model, kids play the role of bees, running from flower to flower to pollinate them.
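For readers who want to see the idea in code, the movement-to-color rule described above can be sketched in a few lines of Python. This is only an illustration: the article does not describe how STEP classifies movement, so the function name, the use of speed alone (ignoring spacing between students), and both threshold values are assumptions.

```python
def state_of_matter(speed, slow_threshold=0.1, fast_threshold=1.5):
    """Map a tracked student's speed (meters per second) to a state and dot color.

    Both thresholds are hypothetical values chosen for illustration;
    they are not taken from the STEP software.
    """
    if speed < slow_threshold:
        return ("solid", "green")   # holding still: ice
    if speed < fast_threshold:
        return ("liquid", "blue")   # moving slowly: water
    return ("gas", "red")           # running around: gas


# Three imaginary students: one standing, one walking, one running.
results = [state_of_matter(s) for s in (0.0, 0.6, 3.0)]
print(results)
```

A real system would likely also check the distance between students, since the lesson asks them to spread out precisely to form ice.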

Research shows that for students, this simple act of perspective-taking leads to improved engagement and creativity, and stronger academic outcomes and social skills.

“Role-taking allows students to identify with the first-person perspective. Kids are so engaged by these types of lessons,” said Enyedy, “not only because they are playing, but because of that empathy. They can imagine what the bee they are acting out might be feeling.”

So far, he and his team have built 10 study units, which have been embraced by teachers and students. One class carried on the lesson long after it was done, building a papier-mâché pollinator habitat and pretending to be bees on the playground for months, said Enyedy.

Joey Grassi, whose computer science class at STEM Prep Academy in Nashville is using GEM-STEP, the AI version of the tool, said his students have benefitted: “It allows them to see computer science in a different way.”

HOW AI BOOSTS RESPONSIVENESS

Engineering professor Biswas, along with Fonteles and Ayalon, teamed up with Enyedy to adapt the STEP model for a new way to teach computing to teens. Where the original STEP lessons were pre-built, GEM-STEP uses AI that lets students interact with and reshape their learning modules in real time.

Cross-disciplinary collaboration is apparent at every phase of development. Education researchers start by defining the learning goals and the behavioral indicators that show how students are responding, such as body language and facial expressions. Computer scientists then turn those indicators into code and scalable validation methods, build classroom-ready sensing systems, such as cameras and audio recorders, and synchronize the data collection process so that everything works together seamlessly.

In one project, students role-play as DJs organizing songs for a party. Each learner wears a tracker synced to a song, and their on-screen avatars are projected at the front of the classroom. As their movements and interactions change the size and position of their avatars, the students gradually realize that they have been sorted by Spotify rankings and, using these visual clues, line up from the top-ranked song at the front of the room to the lowest-ranked at the back.

At the end of the activity, the AI tool reveals the sorting algorithm behind the song rankings, helping students connect their physical experience to the underlying computing and data science concepts. This process, said Grassi, the high school teacher whose class is using GEM-STEP, encourages students “to work collaboratively without putting too much social pressure on them to have to share verbally. It also helps them to see and experience the sorting algorithm executed physically instead of just having to look through code.”
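As a rough picture of what students might see when the algorithm is revealed, here is a minimal Python sketch of sorting songs by rank. The article does not say which sorting algorithm GEM-STEP uses, so insertion sort is an assumption chosen because it mirrors people stepping into a line one at a time; the song titles and rank numbers are invented.

```python
def insertion_sort_by_rank(songs):
    """Return songs ordered from top-ranked (rank 1) to lowest-ranked.

    `songs` is a list of (title, rank) tuples. The data is hypothetical
    and is not taken from the GEM-STEP activity.
    """
    lineup = []
    for song in songs:
        # Start at the back of the line and walk forward past everyone
        # with a worse (larger) rank number, then step into that spot.
        position = len(lineup)
        while position > 0 and lineup[position - 1][1] > song[1]:
            position -= 1
        lineup.insert(position, song)
    return lineup


students = [("Song C", 3), ("Song A", 1), ("Song D", 4), ("Song B", 2)]
lineup = insertion_sort_by_rank(students)
print([title for title, _ in lineup])
```

Each pass through the loop corresponds to one student finding a place in line, which is the physical experience the activity is built around.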

“We want to provide a more expansive way for kids to interact with these technologies,” said Fonteles. “Because later on, that will help them think outside of the box.”

GEM-STEP can support these activities thanks to a multimodal setup, meaning its AI system combines information from several sources, including tracking sensors, classroom video, computer vision (AI that interprets the video stream), system logs and speech recordings. The team’s software platform, Syncflow, aligns video, audio and computer logs so that researchers can study these data streams in a synchronized, integrated way to understand students’ learning behaviors and, sometimes, the difficulties they have in understanding and enacting a concept. “Not many people have developed this kind of software that works in classroom environments,” said Biswas.
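To give a sense of what aligning data streams involves, the Python sketch below matches samples from differently timed streams to a shared clock by picking the nearest timestamp. It is not Syncflow's actual method or API, which the article does not describe; the function names, stream names, and sample values are all hypothetical.

```python
from bisect import bisect_left


def nearest_sample(timestamps, samples, t):
    """Return the sample whose timestamp is closest to t.

    Assumes `timestamps` is sorted and the same length as `samples`.
    """
    i = bisect_left(timestamps, t)
    if i == 0:
        return samples[0]
    if i == len(timestamps):
        return samples[-1]
    before, after = timestamps[i - 1], timestamps[i]
    return samples[i] if after - t < t - before else samples[i - 1]


def align_streams(reference_times, streams):
    """Build one row per reference time with the nearest value from each stream."""
    rows = []
    for t in reference_times:
        row = {"t": t}
        for name, (times, values) in streams.items():
            row[name] = nearest_sample(times, values, t)
        rows.append(row)
    return rows


# Hypothetical streams: tracker positions every 0.5 s, speech labels at irregular times.
streams = {
    "position": ([0.0, 0.5, 1.0, 1.5], ["corner", "corner", "center", "center"]),
    "speech": ([0.2, 1.3], ["silence", "talking"]),
}
aligned = align_streams([0.0, 1.0], streams)
print(aligned)
```

Once every stream shares one timeline, a researcher can ask what a student was saying at the moment they moved, which is the kind of synchronized view the team describes.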

With this capability, the researchers anticipate that GEM-STEP will be able to make real-time observations about behavior and learning. For example, if no one visits a certain corner of the room, or if every group clusters around the same digital “flower” in a bees unit, the AI could use its knowledge of the learning simulation to infer what is happening and then suggest new challenges to keep exploration going.
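One simple way to notice an unvisited corner is to divide the room into a grid and count tracked positions in each cell. The Python sketch below shows that idea under stated assumptions: the room dimensions, the two-by-two grid, the coordinates, and the function names are all invented for illustration and do not reflect how GEM-STEP works internally.

```python
from collections import Counter


def room_coverage(positions, room_size=(10.0, 10.0), grid=(2, 2)):
    """Count tracked (x, y) positions per grid cell, keyed by (column, row).

    Assumes every position lies inside the room, with the origin in one corner.
    """
    width, height = room_size
    cols, rows = grid
    counts = Counter()
    for x, y in positions:
        # Clamp so a point exactly on the far wall lands in the last cell.
        col = min(int(x / width * cols), cols - 1)
        row = min(int(y / height * rows), rows - 1)
        counts[(col, row)] += 1
    return counts


def unvisited_cells(counts, grid=(2, 2)):
    """List the grid cells where no position was recorded."""
    cols, rows = grid
    return [(c, r) for c in range(cols) for r in range(rows) if counts[(c, r)] == 0]


# Hypothetical tracker readings, in meters, for a 10 m by 10 m room.
positions = [(1.0, 1.0), (2.0, 3.0), (8.0, 1.5), (9.0, 2.0), (1.5, 8.0)]
counts = room_coverage(positions)
empty = unvisited_cells(counts)
print(empty)
```

The same counts could flag clustering as well: a cell holding most of the class at once would suggest that every group has gathered around the same "flower."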

Looking ahead, the team envisions responsive AI that can also guide students working in small groups through code development, asking tailored, critical-thinking questions that a single teacher might struggle to pose to every student in a large class. Making computer science physical, social and collaborative in this way can help draw in students who may have felt that coding was not for them.

“Not everyone learns the same way,” said Fonteles. “For some people it can be very cool to sit in front of a computer and start programming, but others are very reticent to go there. They are afraid of making a mistake.” The playful experience of embodiment, she added, can help kids learn by reducing anxiety around technology.

The students also value “the applicability of what they are learning outside of computer science and outside of this specific activity,” said Ayalon.

A CLASSROOM TOOL FOR THE FUTURE

The team continues to work collaboratively with teachers and students to gather feedback and design AI that augments science class for both learners and teachers, keeping “the human in the loop” in what Biswas describes as a hybrid intelligence approach.

When humans stay in the loop, they can use technology to boost empathy and understanding: If kids can become the bee, the music or a molecule, science is no longer abstract. “This is a way of teaching that invites more people in, and helps them feel like they can belong,” said Enyedy.

Noel Enyedy is a professor in the Peabody College Department of Teaching and Learning, where future and current educators can explore innovative ideas alongside research-driven teaching and learning tools.

Portions of this article originally appeared in October 2025.